Our Research

Reinforcement Learning

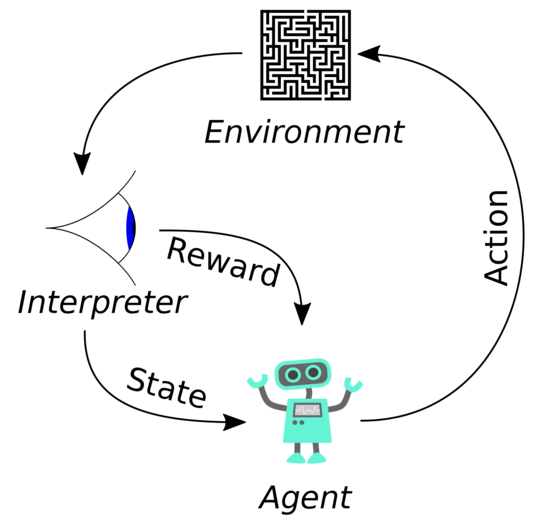

In the reinforcement learning framework, an agent interacts with an environment and learns to select a sequence of actions that achieves a predefined goal. The environment evaluates the agent's performance by emitting a reward, which serves as the optimisation objective. The agent's task is to maximise the cumulative future reward by choosing its actions accordingly. For example, the agent could be a computer program that plays an Atari game (the environment) and tries to reach the highest possible score (the emitted reward).
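
The interaction described above can be sketched as a simple loop. This is a minimal illustration, not our implementation: `LineWorld` is a hypothetical toy environment standing in for, e.g., an Atari game, and its `reset`/`step` interface is an assumption loosely modelled on common reinforcement learning libraries.

```python
class LineWorld:
    """Hypothetical toy environment: the agent walks on cells 0..size-1 and
    receives a reward of 1 when it reaches the rightmost cell."""

    def __init__(self, size=5):
        self.size = size
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # action 0 moves left, action 1 moves right; positions are clipped to the line
        move = 1 if action == 1 else -1
        self.state = min(max(self.state + move, 0), self.size - 1)
        done = self.state == self.size - 1
        reward = 1.0 if done else 0.0
        return self.state, reward, done


def run_episode(env, policy, max_steps=100):
    """Let the agent (a policy mapping states to actions) interact with the
    environment for one episode and return the total reward it collected."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(state)                  # agent selects an action
        state, reward, done = env.step(action)  # environment reacts and emits a reward
        total_reward += reward
        if done:
            break
    return total_reward


print(run_episode(LineWorld(), policy=lambda s: 1))  # always move right: prints 1.0
print(run_episode(LineWorld(), policy=lambda s: 0))  # always move left: prints 0.0
```

The agent's goal is to find a policy that makes `run_episode` return as much reward as possible.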

There are two main approaches to reinforcement learning: (i) model-based reinforcement learning and (ii) model-free reinforcement learning.

In model-based reinforcement learning, a (world) model is learned that captures the dynamics of the environment as accurately as possible. With such a model, the agent can evaluate the outcomes of future actions by simulating them instead of executing them in the real environment.
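
A minimal sketch of this idea, under simplifying assumptions of our own: the environment is a hypothetical deterministic toy problem with few states, so the learned "world model" is just a lookup table, and planning simply simulates all short action sequences inside that model. World models for complex environments such as Atari games are typically neural networks and are far more involved.

```python
import itertools


def true_step(state, action, size=5):
    """Hypothetical deterministic environment, unknown to the agent: move
    left (0) or right (1) on a line; reward 1 whenever the right end is reached."""
    next_state = min(max(state + (1 if action == 1 else -1), 0), size - 1)
    reward = 1.0 if next_state == size - 1 else 0.0
    return next_state, reward


def learn_model(transitions):
    """Fit a tabular world model (state, action) -> (next_state, reward) from
    observed transitions. A lookup table suffices because the toy dynamics
    are deterministic."""
    return {(s, a): (s_next, r) for s, a, s_next, r in transitions}


def plan(model, state, horizon=4, actions=(0, 1)):
    """Simulate every action sequence of length `horizon` inside the learned
    model (no real environment steps) and return the first action of the
    sequence with the highest simulated return."""
    best_return, best_action = float("-inf"), actions[0]
    for sequence in itertools.product(actions, repeat=horizon):
        s, simulated_return = state, 0.0
        for a in sequence:
            if (s, a) not in model:  # transition never observed: stop this rollout
                break
            s, r = model[(s, a)]
            simulated_return += r
        if simulated_return > best_return:
            best_return, best_action = simulated_return, sequence[0]
    return best_action


# Collect experience once, learn the model, then plan purely in simulation.
transitions = [(s, a, *true_step(s, a)) for s in range(5) for a in (0, 1)]
model = learn_model(transitions)
print(plan(model, state=0))  # 1: moving right leads to the reward
```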

In model-free reinforcement learning, the agent learns directly from the experience gained by acting in the environment, without an explicit model of its dynamics.
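
Tabular Q-learning is one classic model-free method; the sketch below is an illustration we chose, not the algorithm used in our publications. It uses a hypothetical toy environment (a short line with a reward at its right end) and hypothetical hyperparameters (`alpha`, `gamma`, `epsilon`), and it updates action values directly from observed transitions without ever storing or querying the environment's dynamics.

```python
import random


def step(state, action, size=5):
    """Hypothetical toy environment: move left (0) or right (1) on a line;
    reaching the right end yields reward 1 and ends the episode."""
    next_state = min(max(state + (1 if action == 1 else -1), 0), size - 1)
    done = next_state == size - 1
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=200, size=5, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning: estimate action values q[state][action] directly
    from sampled transitions, without learning a model of the dynamics."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(size)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # epsilon-greedy: explore at random (also on ties), otherwise act greedily
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, action, size)
            # temporal-difference target; terminal states are not bootstrapped
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q = q_learning()
greedy_policy = [0 if left > right else 1 for left, right in q[:-1]]
print(greedy_policy)  # move right (1) in every non-terminal state
```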

Our publications on reinforcement learning:

- Wagner, Stefan, Michael Janschek, Tobias Uelwer, and Stefan Harmeling. "Learning to Plan via a Multi-step Policy Regression Method." In International Conference on Artificial Neural Networks, pp. 481-492. Springer, Cham, 2021.

- Robine, Jan, Tobias Uelwer, and Stefan Harmeling. "Smaller world models for reinforcement learning." arXiv preprint arXiv:2010.05767 (2020).

Inverse Problems

An inverse problem is the problem of finding the input to a system that produced a given set of observations. Depending on the system, inverse problems occur in different forms. Illustrative examples are (i) image denoising, where the task is to remove (additive) noise from an image, and (ii) image deblurring, where the task is to remove blur introduced, for example, by camera shake or poor focus.
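
Both examples fit the same forward model: the observation y is the unknown image x convolved with a blur kernel k plus additive noise n, i.e., y = k * x + n, and denoising is the special case where k is the identity (a single 1). The NumPy sketch below only simulates this forward direction; circular boundary handling, Gaussian noise, and the box kernel (a crude stand-in for defocus blur) are simplifying assumptions, and real camera blur is often more complex, e.g., spatially varying. The inverse problem is to recover `x` from `y`.

```python
import numpy as np


def blur(image, kernel):
    """Circular 2-D convolution of an image with a blur kernel, computed via
    the FFT (the kernel is zero-padded to the image size)."""
    K = np.fft.fft2(kernel, s=image.shape)
    return np.real(np.fft.ifft2(np.fft.fft2(image) * K))


def observe(image, kernel, noise_std=0.01, seed=0):
    """Forward model y = k * x + n with i.i.d. Gaussian noise n."""
    rng = np.random.default_rng(seed)
    return blur(image, kernel) + noise_std * rng.standard_normal(image.shape)


x = np.zeros((32, 32))
x[8:24, 8:24] = 1.0                      # synthetic "image": a bright square
box = np.full((5, 5), 1.0 / 25)          # 5x5 box blur, normalised to sum 1
y_denoise = observe(x, np.ones((1, 1)))  # denoising: identity kernel, noise only
y_deblur = observe(x, box)               # deblurring: blur plus noise
```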

Many inverse problems are ill-posed: for example, the solution is usually not unique. Finding a "realistic" solution is therefore often very challenging and requires prior assumptions about the image (for example, that natural images often exhibit a reasonable amount of vertical and horizontal edges).
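
One simple way to encode a prior, shown here as an illustration rather than our method, is Tikhonov regularisation: penalise the squared horizontal and vertical finite differences of the estimate, which favours smooth images. Priors that better match the edge statistics of natural images (e.g., sparse-gradient or learned priors) typically require iterative solvers. Assuming circular boundaries, the quadratic objective below has a closed-form minimiser in the Fourier domain, X = conj(K) Y / (|K|^2 + lam (|D_h|^2 + |D_v|^2)); the weight `lam` is a hypothetical tuning parameter that trades data fit against smoothness.

```python
import numpy as np


def tikhonov_deblur(y, kernel, lam=1e-2):
    """Estimate x from y = k * x + n by minimising
        ||k * x - y||^2 + lam * (||d_h * x||^2 + ||d_v * x||^2),
    where d_h, d_v are horizontal/vertical finite differences. With circular
    boundary conditions the minimiser is computed in closed form via the FFT."""
    shape = y.shape
    K = np.fft.fft2(kernel, s=shape)
    Dh = np.fft.fft2(np.array([[1.0, -1.0]]), s=shape)    # horizontal difference
    Dv = np.fft.fft2(np.array([[1.0], [-1.0]]), s=shape)  # vertical difference
    numerator = np.conj(K) * np.fft.fft2(y)
    denominator = np.abs(K) ** 2 + lam * (np.abs(Dh) ** 2 + np.abs(Dv) ** 2)
    return np.real(np.fft.ifft2(numerator / denominator))


# Usage on a noise-free synthetic example (random image, 3x3 box blur).
rng = np.random.default_rng(0)
x = rng.random((32, 32))
kernel = np.full((3, 3), 1.0 / 9)
y = np.real(np.fft.ifft2(np.fft.fft2(x) * np.fft.fft2(kernel, s=x.shape)))
x_hat = tikhonov_deblur(y, kernel, lam=1e-8)
print(np.linalg.norm(x_hat - x) / np.linalg.norm(x))  # small relative error
```

With noisy observations, a larger `lam` suppresses the noise amplification at frequencies where |K| is small, at the cost of a smoother reconstruction.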

Other instances of inverse problems in image processing are image dehazing, image deraining, image super-resolution, compressive sensing, and phase retrieval.
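
Phase retrieval illustrates the non-uniqueness of inverse problems particularly well: only the magnitudes of the Fourier transform are measured, and different images can share exactly the same magnitudes. The NumPy sketch below uses the discrete Fourier transform as a simplified measurement model (an assumption on our part) and checks three such "trivial ambiguities": a circular shift, a point reflection, and a global sign change.

```python
import numpy as np


def fourier_magnitude(image):
    """Simplified phase retrieval measurement: the magnitude of the 2-D DFT;
    the Fourier phase is discarded."""
    return np.abs(np.fft.fft2(image))


rng = np.random.default_rng(0)
x = rng.random((8, 8))

candidates = {
    "shifted": np.roll(x, shift=(2, 3), axis=(0, 1)),  # circular translation
    "reflected": x[::-1, ::-1],                         # rotation by 180 degrees
    "negated": -x,                                      # global sign flip
}
for name, z in candidates.items():
    same_measurements = np.allclose(fourier_magnitude(z), fourier_magnitude(x))
    same_image = np.allclose(z, x)
    print(name, same_measurements, same_image)  # same measurements, different images
```

Because these images cannot be distinguished from the measurements alone, phase retrieval methods must rely on additional assumptions or priors to select a solution.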

Some of our publications on inverse problems:

- Germer, Thomas, Tobias Uelwer, and Stefan Harmeling. "Deblurring Photographs of Characters Using Deep Neural Networks." Inverse Problems & Imaging (to appear). 2022.

- Uelwer, Tobias, Nick Rucks, and Stefan Harmeling. "A Closer Look at Reference Learning for Fourier Phase Retrieval." In NeurIPS 2021 Workshop on Deep Learning and Inverse Problems. 2021.

- Uelwer, Tobias, Alexander Oberstraß, and Stefan Harmeling. "Phase retrieval using conditional generative adversarial networks." In 2020 25th International Conference on Pattern Recognition (ICPR), pp. 731-738. IEEE, 2021.